Scientists have now revealed the first attack strategy that can fool industry-standard autonomous vehicle LiDAR sensors into believing nearby objects are closer or farther away than they actually are, a finding that could lead to ways to defend these machines against such attacks.

A major challenge that researchers developing autonomous vehicles worry about is protecting them against attacks. One common security strategy involves checking measurements from separate sensors against each other to make sure their data make sense together so that an attack cannot easily fool an autonomous vehicle into seeing the world incorrectly — for example, into not seeing a stop light or a pedestrian.

A common sensing strategy used in today’s autonomous vehicles combines 2-D data from cameras and 3-D data from LiDAR. This has proven very robust against a wide range of attacks — until now.

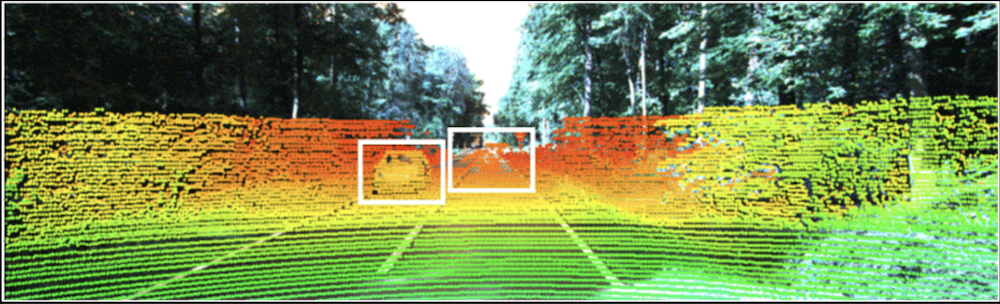

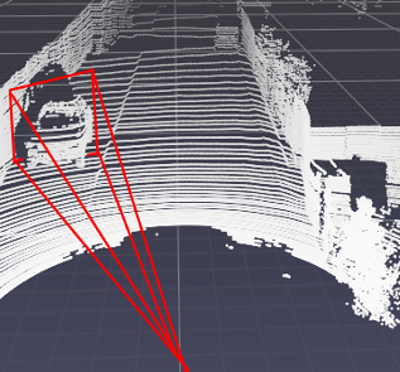

The new attack strategy works by shooting a laser beam into a car’s LiDAR sensor to add false data points to its perception. Previous research found that if those data points are wildly out of place with what a car’s camera is seeing, an autonomous driving system can normally recognize the attack. However, the new research reveals that 3D LiDAR data points carefully placed within a certain area of a camera’s 2D field of view can fool the system.

“Our goal is to understand the limitations of existing systems so that we can protect against attacks,” Miroslav Pajic, an associate professor of electrical and computer engineering at Duke University in Durham, North Carolina, said in a statement. “This research shows how adding just a few data points in the 3D point cloud ahead or behind of where an object actually is can confuse these systems into making dangerous decisions.”

This vulnerable area stretches out in front of a camera’s lens in the shape of a frustum — a 3-D pyramid with its tip sliced off. In the case of a forward-facing camera mounted on a car, this means that a few data points placed in front of or behind another nearby car can shift the system’s perception of it by several yards.
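To make the geometry concrete, here is a minimal Python sketch of why points inside the frustum are hard to catch. It assumes a simple pinhole camera model and a naive consistency check that only asks whether a LiDAR point projects inside an object's 2D bounding box. The intrinsics, the box coordinates, and the function names are hypothetical and chosen for illustration; this is not the researchers' code or any production perception stack.

```python
from typing import NamedTuple, Tuple

Point3D = Tuple[float, float, float]      # camera frame: x right, y down, z forward (meters)
Box2D = Tuple[float, float, float, float]  # (u_min, v_min, u_max, v_max) in pixels


class Intrinsics(NamedTuple):
    """Hypothetical pinhole camera parameters, all in pixels."""
    fx: float
    fy: float
    cx: float
    cy: float


def project(p: Point3D, k: Intrinsics) -> Tuple[float, float]:
    """Project a 3D point in the camera frame onto the image plane."""
    x, y, z = p
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    return (k.fx * x / z + k.cx, k.fy * y / z + k.cy)


def inside_box_frustum(p: Point3D, box: Box2D, k: Intrinsics) -> bool:
    """Naive check: does the point fall inside the frustum behind a 2D box?"""
    if p[2] <= 0:
        return False
    u, v = project(p, k)
    u_min, v_min, u_max, v_max = box
    return u_min <= u <= u_max and v_min <= v <= v_max


def slide_along_ray(p: Point3D, new_depth: float) -> Point3D:
    """Move a point along its camera ray so that only its depth changes."""
    x, y, z = p
    scale = new_depth / z
    return (x * scale, y * scale, new_depth)


# Assumed example values, not measurements from the study.
camera = Intrinsics(fx=1000.0, fy=1000.0, cx=640.0, cy=360.0)
car_box = (650.0, 350.0, 730.0, 420.0)   # 2D detection of the car ahead
real_point = (1.0, 0.5, 20.0)            # genuine LiDAR return, 20 m away
spoofed_point = slide_along_ray(real_point, 12.0)  # injected return, 12 m away

print(inside_box_frustum(real_point, car_box, camera))     # True
print(inside_box_frustum(spoofed_point, car_box, camera))  # True
```

Both points land on the same pixel, so a check that works only in the image plane cannot tell the genuine return from the injected one, even though they are 8 meters apart in depth.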

“This so-called ‘frustum attack’ can fool adaptive cruise control into thinking a vehicle is slowing down or speeding up,” Pajic said in a statement. “And by the time the system can figure out there’s an issue, there will be no way to avoid hitting the car without aggressive maneuvers that could create even more problems.”

Admittedly, there is not much risk of somebody taking the time to set up lasers on a car or roadside object to trick individual vehicles passing by on the highway. However, this risk increases tremendously in military situations where single vehicles can be very high-value targets. And if attackers could find a way of creating these false data points virtually instead of requiring physical lasers, many vehicles could be attacked at once, Pajic and his colleagues noted.

The way to protect against these attacks is added redundancy, Pajic said. For example, if cars had “stereo cameras” with overlapping fields of view, they could better estimate distances and notice LiDAR data that does not match their perception.
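As a rough illustration of that idea, the sketch below uses the standard stereo relation, depth = focal length × baseline / disparity, to get an independent distance estimate and compares it with the LiDAR range. The function names, the camera values, and the 15% tolerance are assumptions made for this example only, not a validated consistency test.

```python
def stereo_depth(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Estimate depth in meters from a rectified stereo pair.

    focal_px:     focal length in pixels
    baseline_m:   distance between the two camera centers in meters
    disparity_px: horizontal pixel shift of the object between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def lidar_consistent(lidar_depth_m: float, stereo_depth_m: float,
                     rel_tol: float = 0.15) -> bool:
    """Flag agreement when LiDAR is within rel_tol of the stereo estimate.

    The tolerance is an arbitrary placeholder for illustration.
    """
    return abs(lidar_depth_m - stereo_depth_m) <= rel_tol * stereo_depth_m


# Assumed example: 1000 px focal length, 0.5 m baseline, 25 px disparity.
estimate = stereo_depth(1000.0, 0.5, 25.0)   # 20 m from the cameras alone

print(lidar_consistent(19.0, estimate))  # True: LiDAR roughly agrees
print(lidar_consistent(12.0, estimate))  # False: LiDAR says 8 m closer
```

A real system would also have to account for stereo error growing with distance and decide how to react once a mismatch is flagged.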

“Stereo cameras are more likely to be a reliable consistency check, though no software has been sufficiently validated for how to determine if the LiDAR-stereo camera data are consistent or what to do if it is found they are inconsistent,” Spencer Hallyburton, a doctoral candidate in Pajic’s Cyber-Physical Systems Lab and the lead author of the study, said in a statement. “Also, perfectly securing the entire vehicle would require multiple sets of stereo cameras around its entire body to provide 100% coverage.”

Another option, Pajic suggested, is to develop systems in which cars in close proximity to one another share some of their data. Physical attacks are unlikely to affect many cars at once, and because different brands of cars may run different operating systems, a cyberattack is unlikely to hit all cars with a single blow.

“With all of the work that is going on in this field, we will be able to build systems that you can trust your life with,” Pajic said in a statement. It might take more than 10 years, “but I’m confident that we will get there,” he added.

The scientists will detail their findings in August at the 2022 USENIX Security Symposium.